ENVIRONMENT RENDERING SYSTEM

BrainGenix Environment Rendering System (ERS) aims to provide virtual environments for brain emulations. ERS will perform sensory translation, converting simulated sensory data into action potentials that can be sent directly to the emulation. It is designed to be used in conjunction with other BrainGenix systems, but it can also be used as a standalone game engine.

GOALS

Our primary goal with ERS is to allow emulations to be embodied in virtual, controllable environments. To become functioning individuals, emulations must be able to interact with the world, and the sensory data rendered by ERS will let them be immersed in an environment. Each emulation will be connected to a virtual avatar that delivers sensory input through neuroprosthetic-like feedback. In the other direction, ERS will accept action potentials from the emulated brain and translate them into skeletal movement of the avatar.

PROGRESS

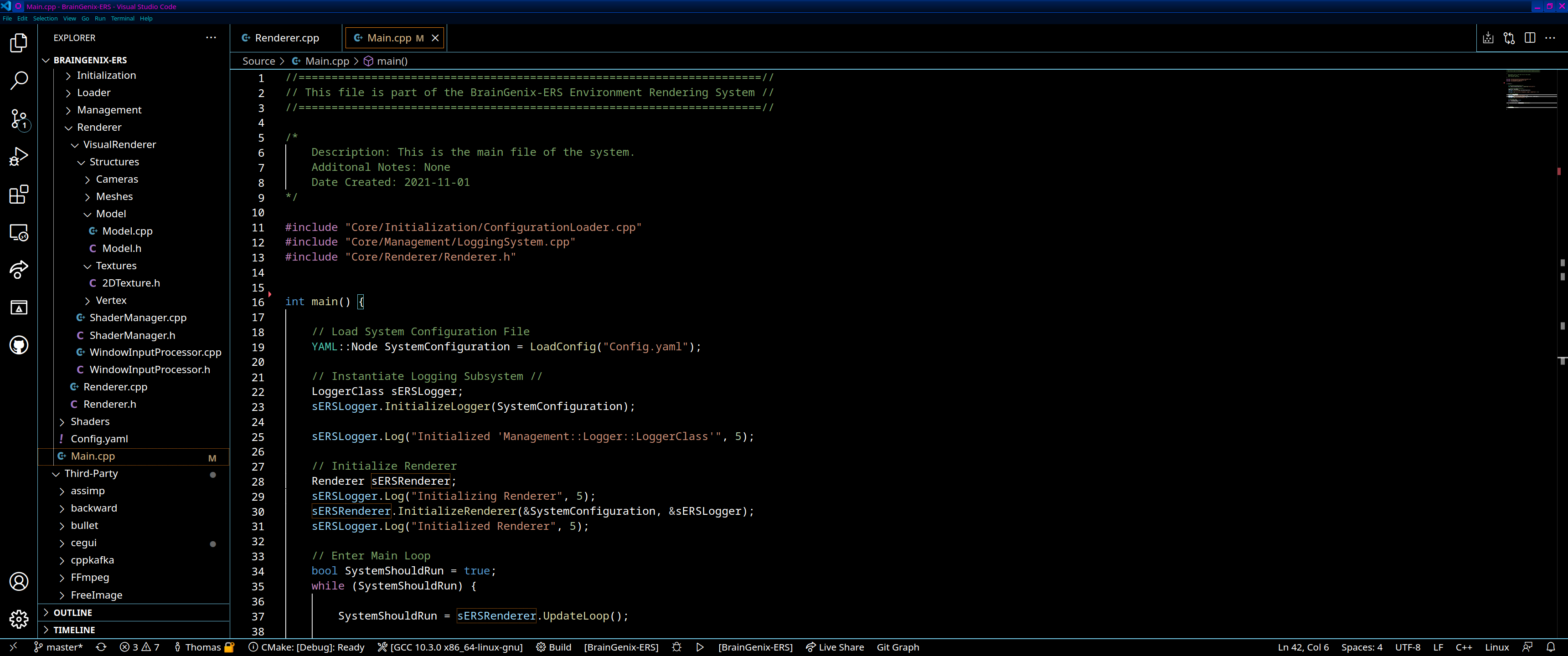

Currently, ERS offers functionality similar to that of many other game engines, including an environment editor, model loading, textures, lighting, scripting, and shader editing. We plan to keep expanding this functionality in order to build a rendering system from the ground up that emphasizes multithreading and other datacenter-oriented paradigms. Keep up with our current progress on the ERS GitLab page and the Issues Board.